In this tutorial, we will build an advanced AI-powered research agent that can write essays on given topics. This agent follows a structured workflow:

- Planning: Generates an outline for the essay.

- Research: Retrieves relevant documents using Tavily.

- Writing: Uses the research to generate the first draft.

- Reflection: Critiques the draft and suggests improvements.

- Iterative Refinement: Conducts further research based on the critique and revises the essay.

The agent repeats the reflection and revision cycle until it reaches a set maximum number of revisions. Let’s dive into the implementation.
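Before touching any LLM or LangGraph code, the control flow above can be traced in plain Python. In this minimal sketch each stage is a stub that only records its name; `run_workflow` is a hypothetical helper, not part of the tutorial's code, and its stopping rule assumes the same check as the `should_continue` function defined later, with `revision_number` starting at 1.

```python
from typing import List


def run_workflow(max_revisions: int) -> List[str]:
    """Return the order in which the workflow stages would run (stubbed)."""
    trace: List[str] = []
    revision_number = 1  # initial value the tutorial passes in when running the graph

    trace.append("planner")        # outline the essay
    trace.append("research_plan")  # search for supporting material

    while True:
        trace.append("generate")   # write (or rewrite) the draft
        revision_number += 1
        # Same test as should_continue: stop once the counter exceeds the limit.
        if revision_number > max_revisions:
            break
        trace.append("reflect")            # critique the draft
        trace.append("research_critique")  # research the weaknesses found
    return trace


print(run_workflow(max_revisions=2))
```

With `max_revisions=2` the trace contains two `generate` steps: the first draft and one revision.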

Setting Up the Environment

We start by installing the required libraries, setting the API keys as environment variables, and importing the necessary modules:

```bash
pip install langgraph==0.2.53 langgraph-checkpoint==2.0.6 langgraph-sdk==0.1.36 langchain-groq langchain-community langgraph-checkpoint-sqlite==2.0.1 tavily-python
```

```python
import os
import sqlite3
from typing import List, TypedDict

from langchain_core.messages import HumanMessage, SystemMessage
from langgraph.checkpoint.sqlite import SqliteSaver
from langgraph.graph import END, StateGraph

# Replace the placeholders with your own API keys.
os.environ["TAVILY_API_KEY"] = "your_tavily_key"
os.environ["GROQ_API_KEY"] = "your_groq_key"

# Persist graph checkpoints in a local SQLite file.
sqlite_conn = sqlite3.connect("checkpoints.sqlite", check_same_thread=False)
memory = SqliteSaver(sqlite_conn)
```

Defining the Agent State

The agent maintains state information, including:

- Task: The topic of the essay

- Plan: The generated plan or outline of the essay

- Draft: The latest draft of the essay

- Critique: The critique and recommendations generated for the draft in the reflection step

- Content: The research content extracted from Tavily search results

- Revision Number: The revision counter, incremented each time a draft is written

- Max Revisions: The maximum number of revisions allowed before the workflow stops
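In LangGraph, a node returns only the keys it changes. For keys declared without a reducer, as in the `AgentState` class here, the returned value simply replaces the old one. Here is a rough plain-Python model of that merge, assuming last-write-wins semantics; `SketchState` and `apply_update` are hypothetical names, and LangGraph does this bookkeeping for you.

```python
from typing import List, TypedDict


class SketchState(TypedDict, total=False):
    # Same keys as AgentState; total=False lets partial dicts type-check.
    task: str
    plan: str
    draft: str
    critique: str
    content: List[str]
    revision_number: int
    max_revisions: int


def apply_update(state: SketchState, update: SketchState) -> SketchState:
    """Return a new state with the node's partial update merged in (last write wins)."""
    merged: SketchState = {**state, **update}
    return merged


state: SketchState = {"task": "LangChain vs LangSmith", "revision_number": 1, "max_revisions": 2}
state = apply_update(state, {"plan": "I. Intro\nII. Comparison"})    # planner output
state = apply_update(state, {"draft": "v1", "revision_number": 2})  # first draft
state = apply_update(state, {"draft": "v2", "revision_number": 3})  # revised draft
print(state["draft"], state["revision_number"], state["plan"].splitlines()[0])
```

Keys a node does not return (here `task` and `plan` after the planner runs) are carried forward unchanged.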

```python
class AgentState(TypedDict):
    task: str
    plan: str
    draft: str
    critique: str
    content: List[str]
    revision_number: int
    max_revisions: int
```

Initializing the Language Model

We use the free Llama model API provided by Groq to generate plans, drafts, critiques, and research queries.

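```python
from langchain_groq import ChatGroq

# Groq model IDs change over time; check Groq's current model list if this one is unavailable.
model = ChatGroq(model="llama-3.3-70b-specdec")
```

Defining the Prompts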

We define a system prompt for each phase of the agent’s workflow (feel free to experiment with them):

```python
PLAN_PROMPT = """You are an expert writer tasked with creating an outline for an essay.
Generate a structured outline with key sections and relevant notes."""

WRITER_PROMPT = """You are an AI essay writer. Write a well-structured essay based on the given research.
Ensure clarity, coherence, and proper argumentation.
------
{content}"""

REFLECTION_PROMPT = """You are a teacher reviewing an essay draft.
Provide detailed critique and suggestions for improvement."""

RESEARCH_PLAN_PROMPT = """You are an AI researcher tasked with finding supporting information for an essay topic.
Generate up to 3 relevant search queries."""

RESEARCH_CRITIQUE_PROMPT = """You are an AI researcher refining an essay based on critique.
Generate up to 3 search queries to address identified weaknesses."""
```

Structuring Research Queries

We use Pydantic to define the schema for the research queries. Passing this schema to the model's `with_structured_output` method makes the LLM return a `Queries` object (a list of query strings) instead of free-form text.
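To see what the schema enforces, this small sketch validates LLM-style JSON against `Queries` directly with Pydantic v2's `model_validate_json`. It assumes Pydantic v2 is installed; in the tutorial, `with_structured_output` takes care of this parsing, so you would not normally call it yourself.

```python
from typing import List

from pydantic import BaseModel, ValidationError


class Queries(BaseModel):
    queries: List[str]


# Well-formed output is parsed into a typed object.
good = Queries.model_validate_json('{"queries": ["LangChain overview", "LangSmith tracing"]}')
print(good.queries)

# Malformed output (a single string instead of a list) is rejected rather than silently accepted.
try:
    Queries.model_validate_json('{"queries": "LangChain overview"}')
except ValidationError as err:
    print("rejected:", err.error_count(), "error(s)")
```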

```python
from pydantic import BaseModel


class Queries(BaseModel):
    queries: List[str]
```

Integrating Tavily for Research

We use Tavily to fetch relevant documents that the essay can draw on.
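The research nodes read only the `content` field of each hit in `response['results']`. Here is a small sketch of that extraction against a hand-written dictionary shaped the way the tutorial's code indexes it. The sample data and the `extract_contents` helper are hypothetical; real Tavily responses carry additional fields such as titles and URLs.

```python
from typing import Any, Dict, List


def extract_contents(response: Dict[str, Any]) -> List[str]:
    """Pull the text snippet out of every search hit, skipping empty ones."""
    return [r["content"] for r in response.get("results", []) if r.get("content")]


sample_response = {
    "results": [
        {"title": "LangChain docs", "url": "https://example.com/a", "content": "LangChain is a framework..."},
        {"title": "LangSmith docs", "url": "https://example.com/b", "content": "LangSmith is a platform..."},
        {"title": "Empty hit", "url": "https://example.com/c", "content": ""},
    ]
}
print(extract_contents(sample_response))
```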

```python
import os

from tavily import TavilyClient

tavily = TavilyClient(api_key=os.environ["TAVILY_API_KEY"])
```

Implementing the AI Agents

1. Planning Node

Generates an essay outline based on the provided topic.
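Every node in this section follows the same pattern: build a message list, call `model.invoke`, and read `.content` from the response. You can exercise that pattern offline by injecting a stand-in model. This is a minimal sketch that assumes a hypothetical `FakeModel` and a hypothetical variant of the planner, `plan_node_with`, that takes the model as an argument. Plain `(role, text)` tuples stand in for LangChain's message classes so the sketch has no dependencies.

```python
from dataclasses import dataclass
from typing import List, Tuple

Message = Tuple[str, str]  # (role, text): stand-in for SystemMessage / HumanMessage


@dataclass
class FakeResponse:
    content: str


class FakeModel:
    """Records the messages it receives and returns a canned reply."""

    def __init__(self, reply: str) -> None:
        self.reply = reply
        self.calls: List[List[Message]] = []

    def invoke(self, messages: List[Message]) -> FakeResponse:
        self.calls.append(messages)
        return FakeResponse(content=self.reply)


# Shortened copy of the tutorial's planning prompt.
PLAN_PROMPT = "You are an expert writer tasked with creating an outline for an essay."


def plan_node_with(model, state: dict) -> dict:
    # Same shape as plan_node: system prompt plus task, return only the "plan" key.
    messages = [("system", PLAN_PROMPT), ("human", state["task"])]
    response = model.invoke(messages)
    return {"plan": response.content}


fake = FakeModel(reply="I. Introduction\nII. LangChain\nIII. LangSmith")
print(plan_node_with(fake, {"task": "LangChain vs LangSmith"}))
```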

```python
def plan_node(state: AgentState):
    messages = [
        SystemMessage(content=PLAN_PROMPT),
        HumanMessage(content=state["task"]),
    ]
    response = model.invoke(messages)
    return {"plan": response.content}
```

2. Research Plan Node

Generates search queries and retrieves relevant documents.
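The research plan node appends up to `max_results=2` snippets per query to whatever content is already in the state. Here is a dependency-free sketch of that accumulation. It uses a hypothetical `fake_search` in place of `tavily.search` and a hypothetical `gather_research` helper, and it copies the existing list first so the previous state is not mutated in place.

```python
from typing import Any, Callable, Dict, List


def fake_search(query: str, max_results: int = 2) -> Dict[str, Any]:
    """Stand-in for tavily.search: returns max_results canned hits per query."""
    return {"results": [{"content": f"{query} snippet {i}"} for i in range(max_results)]}


def gather_research(
    queries: List[str],
    existing: List[str],
    search: Callable[..., Dict[str, Any]] = fake_search,
) -> List[str]:
    content = list(existing)  # copy so the caller's list is left untouched
    for q in queries:
        response = search(query=q, max_results=2)
        for r in response["results"]:
            content.append(r["content"])
    return content


previous: List[str] = []
updated = gather_research(["LangChain", "LangSmith", "LangGraph"], previous)
print(len(updated), len(previous))
```

Three queries with two results each yield six snippets, and `previous` stays empty.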

```python
def research_plan_node(state: AgentState):
    # Ask the model for up to 3 search queries, parsed into a Queries object.
    queries = model.with_structured_output(Queries).invoke([
        SystemMessage(content=RESEARCH_PLAN_PROMPT),
        HumanMessage(content=state["task"]),
    ])
    # Start from any existing research (none on the first run), copied to avoid in-place mutation.
    content = list(state.get("content") or [])
    for q in queries.queries:
        response = tavily.search(query=q, max_results=2)
        for r in response["results"]:
            content.append(r["content"])
    return {"content": content}
```

3. Writing Node

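Uses the research content and the plan to write a draft. The same node runs again after each critique-driven research round, producing a revised draft from the expanded research.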
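The writer prompt is a template with a single `{content}` placeholder, and the research snippets are joined with blank lines (`\n\n`) before being substituted. This sketch shows that assembly using a shortened copy of `WRITER_PROMPT` and a hypothetical `build_writer_messages` helper that returns plain strings instead of LangChain messages.

```python
from typing import List, Tuple

# Shortened copy of the tutorial's writer prompt.
WRITER_PROMPT = """You are an AI essay writer. Write a well-structured essay based on the given research.
------
{content}"""


def build_writer_messages(task: str, plan: str, snippets: List[str]) -> Tuple[str, str]:
    content = "\n\n".join(snippets)  # blank line between research snippets
    system = WRITER_PROMPT.format(content=content)
    user = f"{task}\n\nHere is my plan:\n\n{plan}"
    return system, user


system, user = build_writer_messages("LangChain vs LangSmith", "I. Intro", ["snippet A", "snippet B"])
print(system.endswith("snippet A\n\nsnippet B"))  # True
print(user)
```

Because `str.format` only parses the template, braces inside research snippets are safe; literal braces added to the template itself would need to be doubled (`{{` and `}}`).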

```python
def generation_node(state: AgentState):
    # Join the research snippets with a blank line between them.
    content = "\n\n".join(state.get("content") or [])
    user_message = HumanMessage(
        content=f"{state['task']}\n\nHere is my plan:\n\n{state['plan']}"
    )
    messages = [
        SystemMessage(content=WRITER_PROMPT.format(content=content)),
        user_message,
    ]
    response = model.invoke(messages)
    # Increment the revision counter each time a draft is written.
    return {"draft": response.content, "revision_number": state.get("revision_number", 1) + 1}
```

4. Reflection Node

Generates a critique of the current draft.

```python
def reflection_node(state: AgentState):
    messages = [
        SystemMessage(content=REFLECTION_PROMPT),
        HumanMessage(content=state["draft"]),
    ]
    response = model.invoke(messages)
    return {"critique": response.content}
```

5. Research Critique Node

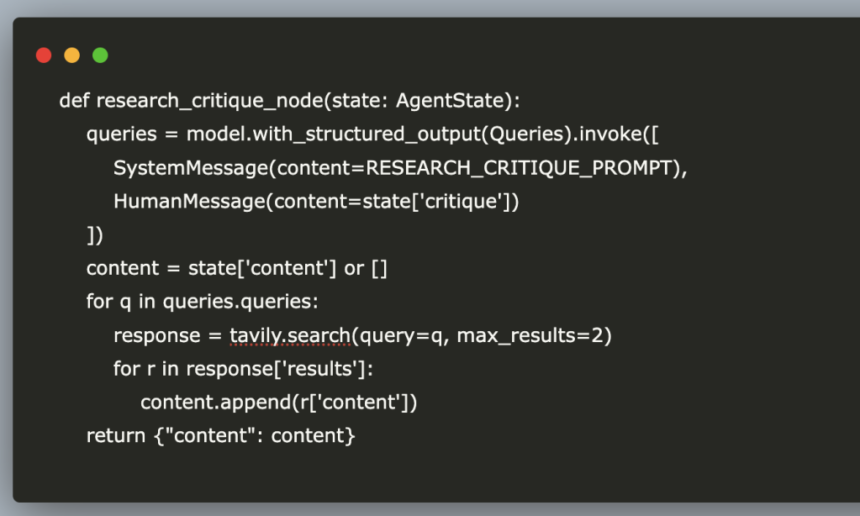

Generates additional research queries based on critique.
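Because this node appends to the content gathered earlier, the same snippet can appear more than once when two queries hit the same page. The tutorial keeps duplicates. If you want to drop them, one option (an addition, not part of the original node) is an order-preserving dedupe before returning, as in this sketch with a hypothetical `merge_unique` helper:

```python
from typing import Iterable, List


def merge_unique(existing: List[str], new_snippets: Iterable[str]) -> List[str]:
    """Append snippets not already present, preserving first-seen order."""
    seen = set(existing)
    merged = list(existing)
    for snippet in new_snippets:
        if snippet not in seen:
            seen.add(snippet)
            merged.append(snippet)
    return merged


print(merge_unique(["a", "b"], ["b", "c", "c"]))  # ['a', 'b', 'c']
```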

```python
def research_critique_node(state: AgentState):
    # Turn the critique into up to 3 follow-up search queries.
    queries = model.with_structured_output(Queries).invoke([
        SystemMessage(content=RESEARCH_CRITIQUE_PROMPT),
        HumanMessage(content=state["critique"]),
    ])
    # Extend a copy of the existing research with the new results.
    content = list(state.get("content") or [])
    for q in queries.queries:
        response = tavily.search(query=q, max_results=2)
        for r in response["results"]:
            content.append(r["content"])
    return {"content": content}
```

Defining the Iteration Condition

We use the revision counter to decide whether to keep revising or end the loop: after each draft, should_continue routes the agent to the reflection step until revision_number exceeds max_revisions.
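Because revision_number starts at 1 and generation_node increments it before the check, the loop produces max_revisions drafts in total, and always at least one. You can confirm this off-by-one behavior by replaying the counter logic without any LLM calls. The `count_drafts` function below is a hypothetical helper that mirrors generation_node and should_continue.

```python
def count_drafts(max_revisions: int, start: int = 1) -> int:
    revision_number = start
    drafts = 0
    while True:
        drafts += 1            # generation_node writes a draft...
        revision_number += 1   # ...and bumps the counter
        if revision_number > max_revisions:  # should_continue returns END
            return drafts
        # otherwise: reflect -> research_critique -> generate again


for limit in range(4):
    print(limit, count_drafts(limit))
```

With the tutorial's `max_revisions=2`, you get an initial draft plus one revision.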

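```python
def should_continue(state: AgentState):
    # Stop once the counter exceeds the configured maximum; otherwise critique the draft.
    if state["revision_number"] > state["max_revisions"]:
        return END
    return "reflect"
```

Building the Workflow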

We define a state graph to connect different nodes in the workflow.

```python
builder = StateGraph(AgentState)

builder.add_node("planner", plan_node)
builder.add_node("generate", generation_node)
builder.add_node("reflect", reflection_node)
builder.add_node("research_plan", research_plan_node)
builder.add_node("research_critique", research_critique_node)

builder.set_entry_point("planner")

# After each draft, either reflect on it or stop.
builder.add_conditional_edges("generate", should_continue, {END: END, "reflect": "reflect"})

builder.add_edge("planner", "research_plan")
builder.add_edge("research_plan", "generate")
builder.add_edge("reflect", "research_critique")
builder.add_edge("research_critique", "generate")

graph = builder.compile(checkpointer=memory)
```

We can also visualize the graph using:

```python
from IPython.display import Image

# Renders the graph as a PNG (for example, in a Jupyter notebook).
Image(graph.get_graph().draw_mermaid_png())
```

Running the AI Essay Writer

```python
# Each thread_id gets its own checkpointed history.
thread = {"configurable": {"thread_id": "1"}}

for s in graph.stream({
    "task": "What is the difference between LangChain and LangSmith",
    "max_revisions": 2,
    "revision_number": 1,
}, thread):
    print(s)
```

And we are done! Go ahead and test the agent with different queries. In this tutorial, we covered the entire process of creating an AI-powered research and writing agent. You can now experiment with different prompts, research sources, and optimization strategies to improve its performance. Here are some future improvements you can try:

- Build a GUI to visualize how the agent works

- Replace the fixed number of revisions with a quality-based stopping condition (e.g., an extra LLM node that decides when the essay is good enough, or a human in the loop)

- Add support for exporting essays directly to PDF
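For the quality-based stopping condition, one possible shape is a routing function that ends the loop when the critique signals approval, while keeping the revision cap as a safety net. This is a hypothetical sketch. It assumes the reflection prompt is changed to ask the model to start its reply with `APPROVED` when no further changes are needed, and it uses a plain string in place of LangGraph's `END` constant so it runs on its own.

```python
END = "__end__"  # stand-in for langgraph.graph.END so the sketch runs on its own


def should_revise(state: dict) -> str:
    """Route after the reflect node: stop on approval or when the cap is reached."""
    critique = state.get("critique", "")
    # Assumes the reflection prompt asks the model to begin with "APPROVED" when satisfied.
    if critique.strip().upper().startswith("APPROVED"):
        return END
    if state["revision_number"] > state["max_revisions"]:
        return END  # safety net: never loop forever
    return "research_critique"


print(should_revise({"critique": "Approved. No further changes.", "revision_number": 2, "max_revisions": 5}))
print(should_revise({"critique": "Not approved: add sources.", "revision_number": 2, "max_revisions": 5}))
```

In the graph, this would replace the fixed `reflect` to `research_critique` edge with something like `builder.add_conditional_edges("reflect", should_revise, {END: END, "research_critique": "research_critique"})`.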

References:

- [DeepLearning.ai: AI Agents in LangGraph](https://learn.deeplearning.ai/courses/ai-agents-in-langgraph)

The post Building an AI Research Agent for Essay Writing appeared first on MarkTechPost.